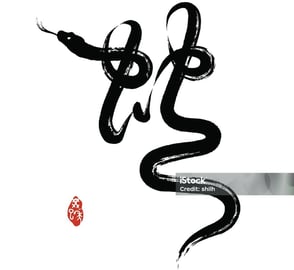

'Chinese Ink Snake' Calligraphy

Trained using Flux LoRA with a customised dataset

TYPEFACE DESIGN | ML PROJECT

Intention

Transform Chinese calligraphy into an ink snake style

Promote traditional aesthetics in a modern medium

Approaches

Style generation (Flux LoRA)

Animation (Runway Image-Video)

AR Visuals (Unity ARKit & VFX Graph)

Project Overview

Concept

AI Development

Cost: $50 (Model Training & Testing) + $30 (Runway)

Timeframe: 3.5 weekends

Key Phases:

Style Model Training and Refinement (3-4 days)

Animation (1 day)

Unity AR Development (2 days)

I've always been terrified of snakes, so when the Year of the Snake approached, I challenged myself to create art that would transform this fear into something meaningful. While researching Chinese snake paintings online, I had an epiphany: the fluid movement of snakes mirrors the flow of Chinese characters, and the abstract nature of Chinese calligraphy opened up a possibility. What if snakes could become a typestyle for Chinese characters? I named this concept "Chinese Snake Calligraphy", in which snake forms adapt to character structures. By focusing on the graceful, abstract flow of Chinese brush ink rather than realistic snake features, I could create something less intimidating yet culturally resonant. My search turned up stunning examples of this harmony between snake forms and Chinese calligraphic strokes (see below).

Character "蛇" meaning 'Snake'

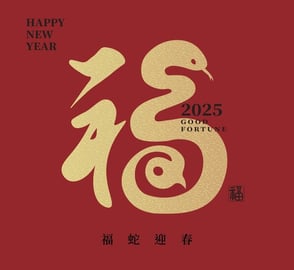

Character "福" meaning 'Fortune'

My goal was straightforward: train a model to transform Chinese calligraphy into ink snake style. Approaches like pix2pix weren't feasible due to limited examples of snake-styled characters. While ControlNet Line Detection can offer precise stroke mapping, it would restrict the artistic freedom needed for abstract interpretations. Therefore, I turned to Flux LoRA* training after consulting with an AI expert who introduced me to fal.ai. Then, studying others' creative processes helped me understand the tool's potential and guided my first steps.

*Flux LoRA combines Flux (an AI image generation model) with LoRA (Low-Rank Adaptation), a technique for fine-tuning AI models, offering a more efficient and accessible way to adapt powerful AI models to create custom artistic styles.

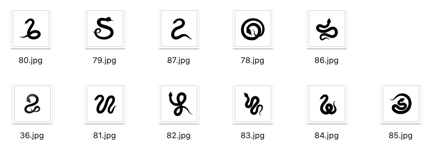

Dataset preparation was undoubtedly the most crucial step. 'Filtering' and 'Categorizing' became my guiding principles. I batch-collected ink snake images with white backgrounds from various sources (Shutterstock, iStock, Pinterest), then ran training iterations while continuously refining the dataset:

1st iteration: Used all snake images of a roughly similar style; the results were unsatisfying

2nd iteration: Filtered to basic snake images without tongues; the results improved, but the snake style wasn't recognizable enough

3rd iteration: Switched to snake images with tongues, achieving more satisfying results

4th iteration: Upscaled and standardized image sizes using AI tools, further enhancing quality

5th iteration: Experimented with different numbers of training steps to optimize results

Final refinement: Tested two approaches:

Dataset A: 11 images in an identical style (similar tongues and eyes)

Dataset B: Dataset A plus additional similar-styled images

Dataset A produced more consistent results
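The filtering and size standardization described in the iterations above can be sketched roughly as follows. This is a minimal illustration, not the workflow actually used: the tag names, file names, and the 1024-pixel canvas are all assumptions, and the actual upscaling was done with AI tools that this sketch does not reproduce. It only shows how a tagged manifest can be filtered and how an image can be fitted onto a square canvas without distortion.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageRecord:
    """One candidate training image plus hand-assigned category tags."""
    path: str
    width: int
    height: int
    tags: frozenset = frozenset()


def select_images(records, required=(), excluded=(), min_side=0):
    """Keep records that carry every required tag, no excluded tag,
    and whose shorter side is at least min_side pixels."""
    required, excluded = set(required), set(excluded)
    return [
        r for r in records
        if required <= r.tags
        and not (excluded & r.tags)
        and min(r.width, r.height) >= min_side
    ]


def fit_to_square(width, height, side=1024):
    """Return (new_w, new_h, offset_x, offset_y) for scaling an image to fit
    a side x side canvas without distortion, centred on the canvas."""
    longest = max(width, height)
    new_w = max(1, round(width * side / longest))
    new_h = max(1, round(height * side / longest))
    return new_w, new_h, (side - new_w) // 2, (side - new_h) // 2


# Hypothetical records: paths, sizes, and tags are made up for illustration.
records = [
    ImageRecord("snake_01.png", 2000, 1000, frozenset({"ink", "tongue"})),
    ImageRecord("snake_02.png", 800, 800, frozenset({"ink"})),
    ImageRecord("snake_03.png", 300, 600, frozenset({"ink", "tongue", "colour"})),
]

# Mirrors the "snakes with tongues" filter, dropping off-style images.
with_tongue = select_images(records, required={"ink", "tongue"}, excluded={"colour"})
for r in with_tongue:
    print(r.path, fit_to_square(r.width, r.height))
```

Keeping the tags in a manifest like this makes it cheap to rebuild a variant of the dataset (with tongues, without tongues, a strict identical-style subset) for each training iteration.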

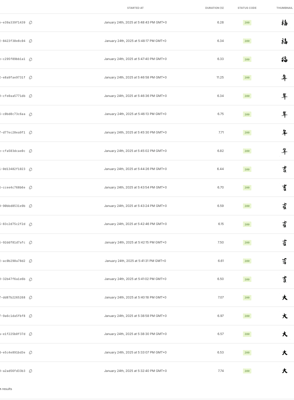

Testing was equally iterative, involving multiple inference tests and parameter adjustments (prompts, scale, seed numbers). To streamline the process, I prepared a testing dataset of 5 Chinese characters on a white background. After numerous inference runs, I cataloged the most satisfying outputs. This systematic approach led to a model that met roughly 90% of my artistic expectations.
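One way to keep this kind of testing systematic is to enumerate every combination of test character, prompt, scale, and seed up front. The sketch below is only an assumption about how such a grid could be organized: the five characters, the prompt text, and the scale and seed values are placeholders, not the values actually used, and `build_grid` and `best_per_character` are hypothetical helper names.

```python
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceCase:
    """One inference run to try: which character, with which settings."""
    character: str
    prompt: str
    scale: float
    seed: int


def build_grid(characters, prompts, scales, seeds):
    """Enumerate every combination of the test inputs and parameters."""
    return [
        InferenceCase(c, p, s, seed)
        for c, p, s, seed in itertools.product(characters, prompts, scales, seeds)
    ]


def best_per_character(ratings):
    """Given {InferenceCase: score} assigned by eye after reviewing outputs,
    return the top-rated case for each character."""
    best = {}
    for case, score in ratings.items():
        current = best.get(case.character)
        if current is None or score > ratings[current]:
            best[case.character] = case
    return best


# Placeholder values for illustration only.
characters = ["蛇", "福", "大", "年", "吉"]
prompts = ["ink snake calligraphy on white background"]
scales = [0.8, 1.0, 1.2]
seeds = [1, 2]

grid = build_grid(characters, prompts, scales, seeds)
print(len(grid), "inference runs planned")
```

Because every case is a frozen dataclass, it can double as a dictionary key when cataloging which outputs were the most satisfying.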

Dataset A

My training history for LoRA models

'Zuck, is that you?'

...

one of my failed attempts

Style Generation

Animation

Takeaways

Iteration and documentation contribute to optimization. Remember to log every training run with its settings and parameters.

For Flux LoRA training, quality beats quantity - a small batch of cohesive-styled images outperforms a large pool of images with inconsistent styles.

Standardizing input image size and quality significantly impacts output consistency.

Having a dedicated testing dataset from the start helps track progress objectively.

Training parameters (steps, learning rate) need careful balance - more isn't always better.

Clear artistic vision helps guide technical decisions - knowing what style you want helps filter datasets effectively.
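The logging habit from the first takeaway can be as simple as appending one JSON line per training run. The following is a minimal sketch under stated assumptions: the field names (`dataset`, `steps`, `learning_rate`, `notes`) and the helpers `log_run` and `load_runs` are hypothetical, chosen to match the parameters mentioned above, and are not part of any training service's API.

```python
import json
from datetime import datetime
from pathlib import Path


def log_run(log_path, *, dataset, steps, learning_rate, notes=""):
    """Append one training run, with its settings, to a JSON Lines file."""
    entry = {
        "timestamp": datetime.now().isoformat(timespec="seconds"),
        "dataset": dataset,
        "steps": steps,
        "learning_rate": learning_rate,
        "notes": notes,
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry, ensure_ascii=False) + "\n")
    return entry


def load_runs(log_path):
    """Read back all logged runs; returns an empty list if no log exists yet."""
    path = Path(log_path)
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

A call such as `log_run("runs.jsonl", dataset="Dataset A", steps=1000, learning_rate=1e-4, notes="tongues only")` (values invented for illustration) would record one run, and `load_runs("runs.jsonl")` returns the full history for side-by-side comparison.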

Moving to animation, I chose Runway Gen-3 Alpha Turbo to bring the snakes to life. Using the Flux outputs as final frames, I prompted "snakes moving around in fluid movement in the center" to mimic the gradual stroke progression of Chinese calligraphy. I used consistent seed numbers to maintain motion coherence. Interestingly, characters with more strokes produced better results than simpler ones like "大 (big)". I am guessing this is because simpler characters have more white space, giving the model too much freedom in motion generation and making it harder to maintain structural integrity. Complex characters provide more visual constraints and anchor points which help the model generate more controlled animations that preserve the character's essence. After multiple iterations, I selected the most successful outputs for final implementation.

Input: Chinese Character '福' (Fortune) → LoRA Style Transfer: Snake Calligraphy → Runway Image-Video: Dynamic Movement

A snippet of my inference testing

Selected Works

Next Steps

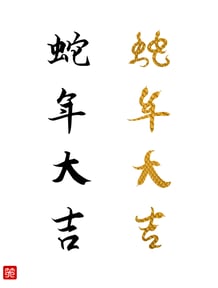

"蛇年大吉 (shé nián dà jí)”– a Chinese New Year blessing meaning “Wish you prosperity in the Year of the Snake”

And... its animated version

Train a new LoRA model for text-to-snake generation, enabling direct text input to generate snake calligraphy

Expand to the English alphabet and create a bilingual snake font system

Make the tool publicly accessible as a web service

I've also brought 'Fortune' to Chinatown and St. Paul's Cathedral